This weekend, I finished reading the articles from WEIS 2006 (Workshop on the Economics of Information Security) -- I like conferences whose proceedings are available online: I can learn a lot, without spending a penny, even in domains which are not my primary focus.

http://weis2006.econinfosec.org/prog.html

A couple of them were finance-related (I currently work for an asset manager):

- Privacy breaches are a source of information that can help predict the future stock price of the affected company -- but only in the short term: the effect vanishes quickly.

- "Information on (yet) undisclosed vulnerabilities" is a traded asset, with three main markets: government agencies, software vendors, underground market.

- Stock spam works: the stocks do exhibit short-term positive returns -- but beware, these are penny stocks, with no liquidity: no one but the spammer can invest in them early enough (the positive returns are actually a consequence of the lack of liquidity when naive spam recipients try to invest).

Many focused on cyber-security investment in companies, either providing a mathematical cost-benefit analysis and drawing conclusions or presenting survey results about current practices.

- In most companies, cyber-security investment does not rely on a rigorous cost-benefit analysis process.

- If you do not have much to lose, do not invest in security (at all); if you do, do not invest too much: recovery procedures are also important.

- The fair price of IT security can be as high as the price of the data/systems/processes to be protected.

- Firms are decreasing their security budget to ensure Sarbanes-Oxley (SOX) compliance.

- Systems (software, company networks, etc.) with better levels of protection have stronger incentives to reveal their security characteristics to attackers than poorly protected systems.

- (Small) companies with a TRUSTe certificate are less reliable than those without.

- Software vendors should be held liable for software faults.

- There was also a study of internet outages in manufacturing: the effects depend on what the company does when no information comes in: either produce and ship as usual (fine) or wait for orders (disastrous -- this is the case of the automobile industry).

Graph theory:

- Strategies to make a network resilient to attacks, mainly centrality attacks: split large nodes into rings (does not work) or into cliques (better), delegation (a large node selects two of its neighbours, connects them, and disconnects from one -- does not work), or cliques+delegation (even better).

- When you monitor someone (in a social network), you also monitor the people he interacts with -- conversely, to monitor someone, you can start to monitor the people around him.
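To make the node-splitting idea from the first item concrete, here is a toy sketch in Python. It is not the article's model: the star-shaped network, the round-robin way of reassigning neighbours, and the function names (`split_hub`, `attack`, `largest_component`) are all assumptions made for illustration. It replaces a hub by a few replicas wired as a ring or as a clique, removes the highest-degree node twice (a simple degree-centrality attack), and reports the size of the largest connected piece that survives.

```python
from collections import deque

def split_hub(graph, hub, parts, shape="clique"):
    """Copy `graph`, replacing `hub` by `parts` replicas wired as a clique or a ring.

    The hub's former neighbours are dealt out to the replicas round-robin
    (an assumption of this sketch, not something taken from the article).
    """
    new = {n: set(nb) - {hub} for n, nb in graph.items() if n != hub}
    replicas = [(hub, i) for i in range(parts)]
    for r in replicas:
        new[r] = set()
    for i, r in enumerate(replicas):
        if shape == "clique":
            others = replicas                      # link to every other replica
        else:
            others = [replicas[(i + 1) % parts]]   # ring: link to the next replica
        for s in others:
            if s != r:
                new[r].add(s)
                new[s].add(r)
    for i, n in enumerate(sorted(graph[hub], key=str)):
        r = replicas[i % parts]
        new[r].add(n)
        new[n].add(r)
    return new

def attack(graph, rounds=1):
    """Remove the highest-degree node `rounds` times; return the damaged copy."""
    g = {n: set(nb) for n, nb in graph.items()}
    for _ in range(rounds):
        target = max(g, key=lambda n: len(g[n]))
        for nb in g.pop(target):
            g[nb].discard(target)
    return g

def largest_component(graph):
    """Size of the largest connected component, by breadth-first search."""
    seen, best = set(), 0
    for start in graph:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:
            node = queue.popleft()
            size += 1
            for nb in graph[node] - seen:
                seen.add(nb)
                queue.append(nb)
        best = max(best, size)
    return best

# A star: one hub "h" connected to 12 leaves.
leaves = [f"n{i}" for i in range(12)]
star = {"h": set(leaves), **{n: {"h"} for n in leaves}}

for name, g in [("star", star),
                ("ring", split_hub(star, "h", 4, "ring")),
                ("clique", split_hub(star, "h", 4, "clique"))]:
    print(name, largest_component(attack(g, rounds=2)))
# prints: star 1, ring 4, clique 8
```

On this tiny example the ring falls apart after two removals while the clique keeps most nodes connected, which is in line with the ordering reported above, but a 13-node toy is of course no substitute for the article's analysis.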

There was also a non-graph-theoretic, algorithmic article, about Distributed Constraint Optimization (DCOP), i.e., calendar problems when you do not want to divulge your availability or preferences to the person you need to schedule an appointment with.

A couple of articles focused on privacy issues.

- Opt-out schemes provide higher welfare than anonymity, which is better than opt-in -- but the article stops there. This actually calls for a fourth option, e.g., "privacy-enhanced data mining".

- The opposite of privacy is (price) discrimination.

An article reminded the reader that users exist and are lazy: if you want them to fix problems, fixes should be easy to do and/or low-cost.

An article detailed consequences of lack of interoperability: proprietary storage formats on mobile phones hinder forensic investigations.

An article examined the non-adoption of secure internet protocols (HTTPS, DNSSEC, IPSec, etc.): for people to start using them, they have to already be in use -- they have to be bootstrapped. (HTTPS went fine only because it was imposed by credit card companies, but failed to completely replace HTTP.)

posted at: 19:18 | path: /Misc | permanent link to this entry

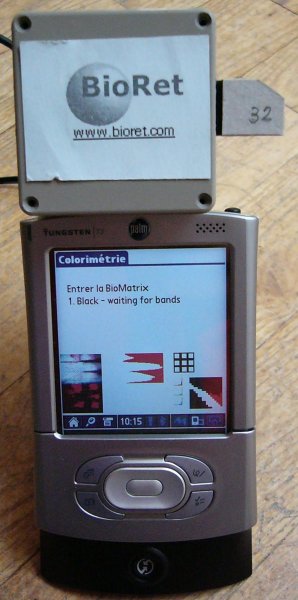

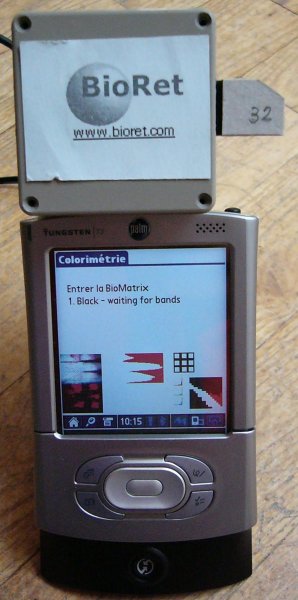

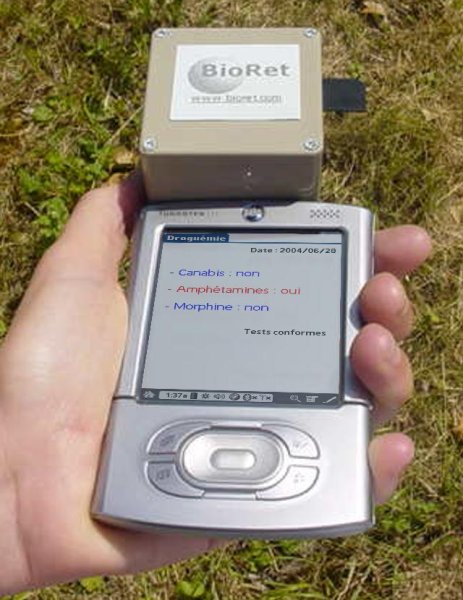

The company I used to work for, BioRet, closed.

http://www.bioret.com/

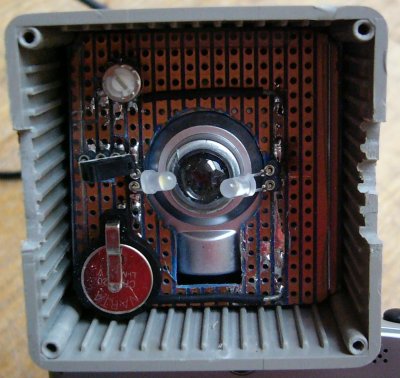

A start-up at the frontier between biology, electronics and optics, we were designing "biochip readers". One can do biological analyses in the field, one at a time, or in the laboratory, thousands at a time: but there was nothing in the middle, no means of easily performing dozens of tests in the field. The biologists in our team were designing the biochips -- they looked like miniaturized versions of the strips currently used for tests in the field, but the size changes the physico-chemical phenomena. The opto-electronics engineers were designing the artificial retina (something between the CCD of digital cameras and the processor in a computer, a light sensor with computing power -- it is called a CMOS). My work, at the time, was to develop (to program) a proof-of-concept, built from a PDA with a digital camera. We had a small version (only three tests, each done twice, plus three control tests) of a biochip, but no artificial retina had been produced.

Why did we fail?

The project was too innovative -- Entrepreneurs beware: do not try to be innovative in France! (This is not a joke: when we explained our project to potential customers, partners or investors, their immediate reaction was: "why are you so innovative? Why don't you try some more classical, less efficient but less risky methods?".)

The project was at the frontier between several domains; investors, each specialized in only one of these domains, therefore had trouble understanding, assessing and trusting our ideas.

The project was also too ambitious: developing a chip is probably too ambitious for a start-up.

The prototyping of the application on the PDA to read the biochip was done in R (on Linux): I had implemented a few image processing algorithms ("mathematical morphology"). In case someone is interested, here is the code (distributed under the GPL)

http://zoonek2.free.fr/UNIX/48_R/morphology.R

and here is some documentation (with a lot of pictures, as always), in French.

http://zoonek.free.fr/Ecrits/2004_BioRet.pdf.bz2

http://zoonek2.free.fr/UNIX/48_R/Rapport_final_morphologie.Rnw
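For readers who have never met mathematical morphology, here is a minimal sketch of its two basic operations, erosion and dilation, on a binary image. It is written in Python rather than R and is not a translation of the code linked above: the function names, the cross-shaped structuring element and the tiny test image are assumptions chosen for illustration. Composing the two operations gives an "opening", which removes specks smaller than the structuring element while leaving larger shapes in place.

```python
# Cross-shaped structuring element, as (row, column) offsets from the centre.
# It is symmetric, so no reflection is needed for the dilation.
CROSS = [(0, 0), (-1, 0), (1, 0), (0, -1), (0, 1)]

def pixel(img, y, x):
    """Pixel value, treating everything outside the image as background (0)."""
    if 0 <= y < len(img) and 0 <= x < len(img[0]):
        return img[y][x]
    return 0

def erode(img, se=CROSS):
    """Keep a pixel only if the structuring element fits entirely in the shape."""
    return [[int(all(pixel(img, y + dy, x + dx) for dy, dx in se))
             for x in range(len(img[0]))] for y in range(len(img))]

def dilate(img, se=CROSS):
    """Set a pixel if the structuring element touches the shape anywhere."""
    return [[int(any(pixel(img, y + dy, x + dx) for dy, dx in se))
             for x in range(len(img[0]))] for y in range(len(img))]

def opening(img, se=CROSS):
    """Erosion followed by dilation: drops details smaller than `se`."""
    return dilate(erode(img, se), se)

# A cross-shaped blob plus one isolated noise pixel in the top-right area.
img = [
    [0, 0, 0, 0, 0, 0, 0],
    [0, 0, 1, 0, 0, 0, 1],
    [0, 1, 1, 1, 0, 0, 0],
    [0, 0, 1, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
]

for row in opening(img):
    print("".join(".#"[v] for v in row))
```

In this example the erosion shrinks the blob to its centre pixel and deletes the noise pixel; the dilation then grows the centre back into the original cross, so the output is the input image without the speck.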

posted at: 19:17 | path: /Misc | permanent link to this entry